Analyze SERP Backlink Profiles in Bulk for SEO Using Python

The complexity of SEO strategy demands real proficiency with tools that mine, analyze, and act on data.

Among the many tasks an SEO professional tackles, competitor backlink analysis is critical.

Python, a versatile programming language, is well suited to SEO tasks, notably extracting competitor backlinks.

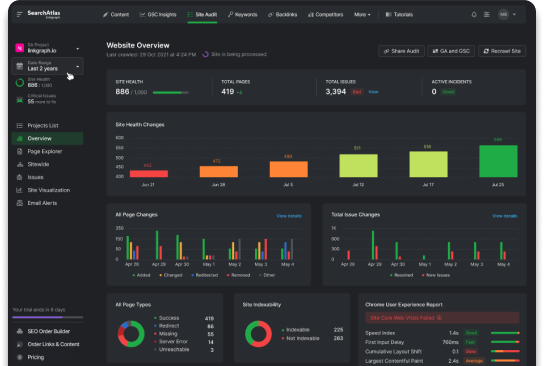

Search Atlas by LinkGraph is among the dependable tools that streamline link building using Google-compliant methods.

In this article, you’ll discover how Python and dependable backlink tools like Search Atlas can reshape your SEO strategy and solidify your brand authority.

Key Takeaways

- Python is a valuable tool for backlink analysis in SEO, enabling data collection, cleaning, and analysis.

- Web scraping tools like BeautifulSoup and Scrapy can be used with Python to extract data from websites and analyze backlink quality and volume.

- Python can be used in website experiments to gather data on competitor backlinks and optimize SEO strategies.

- Python can automate website speed checks with tools like Google’s Lighthouse, helping you optimize webpage loading times.

- Setting up API variables in Python allows for interaction with APIs and access to valuable SEO data for analysis and strategy formulation.

Understanding Data Import and Cleaning

When embarking on Python-assisted backlink analysis, the first stage usually involves collecting the required data. Python’s ‘requests’ library (loaded with ‘import requests’) makes bulk data retrieval straightforward, for example by querying a backlink tool’s API for many domains. This provides a comprehensive view of backlink data, which is essential for sound SEO work.
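A minimal sketch of bulk retrieval with `requests`, assuming a hypothetical backlink API at `api.example.com` that accepts `target` and `page` parameters and returns a JSON object with a `backlinks` list. The endpoint, parameter names, and response key are placeholders, not any real tool’s API:

```python
import time
from typing import Callable, Iterator

import requests

# Hypothetical endpoint and response shape: substitute your backlink tool's documented API.
API_URL = "https://api.example.com/v1/backlinks"


def fetch_page(session: requests.Session, domain: str, page: int) -> dict:
    """Request one page of backlinks for a competitor domain."""
    response = session.get(API_URL, params={"target": domain, "page": page}, timeout=30)
    response.raise_for_status()  # Raise on 4xx/5xx instead of silently continuing
    return response.json()


def iter_backlinks(
    domains: list[str],
    fetch: Callable[[str, int], dict],
    delay: float = 1.0,
) -> Iterator[dict]:
    """Yield backlink rows for each domain, paging until the API returns an empty page."""
    for domain in domains:
        page = 1
        while True:
            rows = fetch(domain, page).get("backlinks", [])  # "backlinks" key is assumed
            if not rows:
                break
            for row in rows:
                yield {"competitor": domain, **row}
            page += 1
            time.sleep(delay)  # Pause between requests to respect rate limits
```

The fetcher is passed in as a parameter so the pagination logic can be tested without network access; in practice you would open a `requests.Session` and pass `lambda d, p: fetch_page(session, d, p)`.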

Once the data is collected, cleaning it is an equally critical step in Python-aided SEO analysis. Cleaning typically involves removing unwanted observations, fixing structural errors, handling missing data, and filtering out irrelevant outliers.

Adopting such practices ensures that the insights derived from the data are both accurate and reliable.

When processing backlink data, pandas DataFrames are a powerful tool that simplifies analysis. After ‘import pandas as pd’, the backlink data can be compiled into a structured DataFrame with ‘pd.DataFrame’, which makes it easy to filter, sort, and aggregate.

This offers a manageable way to work with the collected data, especially when dealing with vast backlink profiles.
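A minimal pandas sketch of loading raw rows into a DataFrame and applying the cleaning steps described earlier. The column names (`source_url`, `target_url`, `anchor_text`, `domain_rating`) are assumptions; real exports name them differently, and the 0 to 100 range check assumes a rating on that scale:

```python
import pandas as pd


def clean_backlinks(rows: list[dict]) -> pd.DataFrame:
    """Load raw backlink rows into a DataFrame and apply basic cleaning steps."""
    df = pd.DataFrame(rows)

    # Fix structural errors: strip stray whitespace from URLs and anchor text.
    df["source_url"] = df["source_url"].str.strip()
    df["anchor_text"] = df["anchor_text"].fillna("").str.strip()

    # Handle missing data: unparseable ratings become NaN, then incomplete rows are dropped.
    df["domain_rating"] = pd.to_numeric(df["domain_rating"], errors="coerce")
    df = df.dropna(subset=["source_url", "domain_rating"])

    # Remove unwanted observations: the same source linking to the same target twice.
    df = df.drop_duplicates(subset=["source_url", "target_url"])

    # Filter outliers: keep ratings inside the assumed 0-100 scale.
    df = df[df["domain_rating"].between(0, 100)]

    return df.reset_index(drop=True)
```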

Python also allows for the selective extraction of valuable data from web pages. BeautifulSoup and lxml are two prominent libraries for web scraping: they parse the HTML fetched from a target URL so that specific elements, such as links, can be captured.

This capability aids in understanding the SEO contribution of individual domains, grounding the overall SEO campaign in data-led decisions.
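The sketch below uses the standard library’s `html.parser` so it runs without extra installs; with BeautifulSoup, `soup.find_all("a", href=True)` plus `a.get_text(strip=True)` covers the same ground. It assumes the page HTML has already been fetched (for example with `requests.get(url, timeout=30).text`):

```python
from html.parser import HTMLParser
from typing import Optional
from urllib.parse import urljoin


class LinkExtractor(HTMLParser):
    """Collect every <a href> on a page with its absolute URL, rel attribute, and anchor text."""

    def __init__(self, base_url: str):
        super().__init__()
        self.base_url = base_url
        self.links: list[dict] = []
        self._current: Optional[dict] = None

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr_map = dict(attrs)
        href = attr_map.get("href")
        if href:  # Skip <a> tags without a link target
            self._current = {
                "href": urljoin(self.base_url, href),  # Resolve relative links
                "rel": (attr_map.get("rel") or "").lower(),
                "text": "",
            }

    def handle_data(self, data):
        if self._current is not None:
            self._current["text"] += data

    def handle_endtag(self, tag):
        if tag == "a" and self._current is not None:
            # Collapse internal whitespace in the anchor text.
            self._current["text"] = " ".join(self._current["text"].split())
            self.links.append(self._current)
            self._current = None


def extract_links(html: str, base_url: str) -> list[dict]:
    parser = LinkExtractor(base_url)
    parser.feed(html)
    parser.close()
    return parser.links
```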

Analyzing Link Quality and Volumes

When it comes to in-depth backlink research, one must not overlook the importance of analyzing link quality. Search Atlas by LinkGraph is well equipped for this task and also offers high-quality backlink generation services.

Its backlink analyzer tool helps identify top-notch, Google-compliant link opportunities, bringing a higher return on SEO efforts.

Data analysis is central to determining the quality of backlinks. Web scraping tools like Scrapy and BeautifulSoup can retrieve on-page data points such as anchor text and target URL, while metrics like domain rating usually come from a backlink tool’s export or API.

This information provides a snapshot of each backlink’s potential value, helping you structure a more effective SEO strategy.
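A rough sketch of turning those data points into quality tiers. It assumes each row carries a `rel` string and a numeric `domain_rating` taken from a backlink tool export; the 50-point threshold and the three tiers are illustrative choices, not an established scoring method:

```python
from collections import Counter

# Illustrative assumption, not an industry standard: tune for your niche and metric.
HIGH_RATING = 50
# rel values that ask search engines not to pass ranking credit (Google treats them as hints).
NON_FOLLOW_RELS = {"nofollow", "sponsored", "ugc"}


def quality_tier(row: dict) -> str:
    """Assign a rough tier from the link's rel attribute and the linking domain's rating."""
    rel_tokens = set(str(row.get("rel") or "").lower().split())
    followed = not (rel_tokens & NON_FOLLOW_RELS)
    strong_domain = float(row.get("domain_rating") or 0) >= HIGH_RATING
    if followed and strong_domain:
        return "high"
    if followed or strong_domain:
        return "medium"
    return "low"


def summarize_quality(rows: list[dict]) -> Counter:
    """Count how many backlinks fall into each tier."""
    return Counter(quality_tier(row) for row in rows)
```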

In parallel to link quality, the volume of backlinks (the backlink count) is another crucial aspect of backlink profiling. A substantial link count can help drive search traffic, but volume alone says little. A Python-oriented approach reveals whether that volume comes from diverse sources, which is what makes a link profile robust and well balanced.

Drawing insights from link volumes with Python can also help map trends over time, pinpointing the periods when link-building efforts yielded notable results.

Such evidence-backed findings can further fine-tune the SEO campaign towards increased efficacy.
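A sketch of the volume, diversity, and trend checks above, using only the standard library. It assumes hypothetical `source_url` and `first_seen` (ISO date string) fields; the share of links from the single largest referring domain is used here as one simple concentration signal among many possible:

```python
from collections import Counter
from datetime import date
from urllib.parse import urlparse


def referring_domain(url: str) -> str:
    """Hostname of the linking page, without a leading 'www.'."""
    return (urlparse(url).hostname or "").removeprefix("www.")


def link_profile_summary(rows: list[dict]) -> dict:
    """Summarize volume, source diversity, and monthly acquisition for backlink rows."""
    domains = Counter(referring_domain(row["source_url"]) for row in rows)
    monthly = Counter(date.fromisoformat(row["first_seen"]).strftime("%Y-%m") for row in rows)
    total = sum(domains.values())
    return {
        "total_links": total,
        "referring_domains": len(domains),
        # Share of all links from the largest referrer: high values mean a concentrated profile.
        "top_domain_share": domains.most_common(1)[0][1] / total if total else 0.0,
        "new_links_by_month": dict(sorted(monthly.items())),
    }
```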

Website Experiments: How and Why

Running website experiments is an essential part of SEO, providing insights grounded in data. Python proves highly effective here, since it can drive crawler frameworks such as Scrapy.

Website experiments provide timely feedback on the effectiveness of the SEO strategy in place. They can also use Python’s web scraping tools to gather data from the HTML elements of competitor websites.

Understanding how a competitor’s backlinks are performing can help businesses tailor their SEO approach, focusing on the areas that drive results. The data collected from these experiments can then be loaded into pandas DataFrames (via ‘import pandas as pd’), which simplifies analysis.

DataFrames make it easy to extract vital metrics such as domain authority, link count, and brand authority, which provide a clear benchmark for the effectiveness of the current SEO campaign. Website experiments can also support regular SEO audits.

By extracting backlink data in bulk with Python, businesses can monitor performance continuously and judge whether adjustments are needed. This allows an agile response to the ever-changing search engine landscape.
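One way to sketch this monitoring: compare two backlink snapshots pulled at different times and list what was gained or lost. Treating a link as a (`source_url`, `target_url`) pair is an assumption for illustration:

```python
def link_key(row: dict) -> tuple[str, str]:
    """Identify a backlink by where it comes from and where it points."""
    return (row["source_url"], row["target_url"])


def diff_snapshots(previous: list[dict], current: list[dict]) -> dict:
    """Report links gained, lost, and kept between two snapshots."""
    before = {link_key(row) for row in previous}
    after = {link_key(row) for row in current}
    return {
        "gained": sorted(after - before),
        "lost": sorted(before - after),
        "kept": len(before & after),
    }
```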

Using Google Lighthouse for Website Speed Testing

Site speed is a vital factor in both user experience and search engine ranking. Google’s Lighthouse tool measures page performance and reports detailed speed metrics; Python can run Lighthouse and process its output, helping businesses analyze and improve loading times.

Integrating Python with Lighthouse allows SEO professionals to process complex speed data efficiently. This combination helps identify speed problems that may hurt search rankings.

Python can automate regular speed checks, ensuring that fast loading times are maintained. This is particularly valuable for larger websites, where manual testing would be impractical.
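A sketch of automated speed checks, assuming the Lighthouse CLI is installed (for example via `npm install -g lighthouse`) and Chrome is available. The flags shown are standard Lighthouse CLI options, but check `lighthouse --help` for your version; `summarize_report` reads the JSON report’s `categories` and `audits` sections:

```python
import json
import subprocess

# Lighthouse audit IDs for key loading metrics (timings in milliseconds; CLS is unitless).
KEY_AUDITS = [
    "first-contentful-paint",
    "largest-contentful-paint",
    "total-blocking-time",
    "cumulative-layout-shift",
]


def run_lighthouse(url: str) -> dict:
    """Run the Lighthouse CLI headlessly for one URL and return the parsed JSON report."""
    completed = subprocess.run(
        [
            "lighthouse", url,
            "--output=json",
            "--output-path=stdout",
            "--only-categories=performance",
            "--quiet",
            "--chrome-flags=--headless",
        ],
        capture_output=True,
        text=True,
        check=True,  # Raise CalledProcessError if Lighthouse exits with an error
    )
    return json.loads(completed.stdout)


def summarize_report(report: dict) -> dict:
    """Pull the performance score (0-1) and key metric values out of a Lighthouse report."""
    summary = {"performance_score": report["categories"]["performance"]["score"]}
    for audit_id in KEY_AUDITS:
        summary[audit_id] = report["audits"][audit_id].get("numericValue")
    return summary
```

Calling `summarize_report(run_lighthouse(url))` for each URL from a scheduler such as cron gives regular, hands-off checks whose results can be stored and compared over time.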

Lighthouse reports also provide recommendations for improving website performance. Integrating these suggestions with Python’s data analysis capabilities can help create an action plan for speed optimization.
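To turn report suggestions into an action plan, this sketch ranks Lighthouse opportunity audits by estimated savings. It assumes the report marks opportunities with `details.type == "opportunity"` and an `overallSavingsMs` estimate, as Lighthouse JSON reports have done; newer versions may differ, so the code reads those fields defensively:

```python
def top_opportunities(report: dict, limit: int = 5) -> list[tuple[str, float]]:
    """Return (audit title, estimated savings in ms) for the largest opportunities."""
    opportunities = []
    for audit in report["audits"].values():
        details = audit.get("details") or {}
        savings = details.get("overallSavingsMs") or 0
        if details.get("type") == "opportunity" and savings > 0:
            opportunities.append((audit["title"], savings))
    # Biggest estimated savings first, so the action plan starts with the largest wins.
    return sorted(opportunities, key=lambda item: item[1], reverse=True)[:limit]
```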

This combined approach is a robust strategy for improving search traffic.

Setting up API Variables: A Step by Step Guide

Setting up API variables is a crucial step in Python-assisted SEO tasks. These variables enable Python scripts to interact with APIs, granting access to valuable SEO data from search engines.

Python’s ‘requests’ library is commonly used to make the API calls, while the endpoint, credentials, and parameters are held in ordinary Python variables. Responses are typically returned in JSON format, which makes the data easy to parse and analyze.

Here is a simplified step-by-step guide for setting up API variables:

1. Import the requests library in Python.

2. Define the API endpoint.

3. Specify the target URL or resource.

4. Add any necessary request parameters, such as search keywords.

5. Make the API call and retrieve the response.

6. Parse the response into a JSON object.
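The six steps above can be sketched with `requests` as follows. The endpoint, the `target`/`q`/`api_key` parameter names, and the `SEO_API_KEY` environment variable are hypothetical placeholders; substitute the values your API provider documents:

```python
import os

import requests  # Step 1: import the requests library

# Step 2: define the API endpoint (hypothetical; use your provider's documented URL).
API_ENDPOINT = "https://api.example.com/v1/search"
# Read credentials from an environment variable instead of hard-coding them (name is hypothetical).
API_KEY = os.environ.get("SEO_API_KEY", "")


def build_request(target_url: str, keyword: str) -> requests.PreparedRequest:
    """Steps 3-4: set the target resource and request parameters (names are hypothetical)."""
    params = {"target": target_url, "q": keyword, "api_key": API_KEY}
    return requests.Request("GET", API_ENDPOINT, params=params).prepare()


def call_api(prepared: requests.PreparedRequest) -> dict:
    """Steps 5-6: send the request, fail on HTTP errors, and parse the JSON body."""
    with requests.Session() as session:
        response = session.send(prepared, timeout=30)
    response.raise_for_status()
    return response.json()
```

`call_api(build_request("https://example.org/", "backlink checker"))` would perform the request; building a `PreparedRequest` first makes it easy to inspect the final URL before spending API credits.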

Once the JSON response is parsed, Python tools like pandas can be used to analyze the data. The insights gained from this analysis can then inform the brand’s link strategy, optimizing its SEO campaign for improved search traffic.

Making an Effective SERP API Call

An effective SERP API call is critical for Python-assisted backlink analysis. These calls return data from search engine result pages that can be used to build an efficient SEO strategy.

While Python makes SERP API calls simple, a few key steps should be followed to ensure maximum benefits.

Follow these steps to make an effective SERP API call:

1. Specify the search engine to use.

2. Define the search query.

3. Set the geographic location to tailor the SERP data to a specific market.

4. Determine the data type to retrieve: organic results, paid results, or local search.
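The four steps can be captured in a small parameter builder. Every parameter name and allowed value here is hypothetical, since each SERP API defines its own; the `num` cap reflects the trade-off between data volume and relevance:

```python
# Hypothetical parameter values; each SERP API documents its own names and options.
ALLOWED_RESULT_TYPES = {"organic", "paid", "local"}


def build_serp_params(
    query: str,
    engine: str = "google",
    location: str = "United States",
    result_type: str = "organic",
    num: int = 10,
) -> dict:
    """Assemble SERP request parameters, validating them before any API credits are spent."""
    query = query.strip()
    if not query:
        raise ValueError("query must not be empty")
    if result_type not in ALLOWED_RESULT_TYPES:
        raise ValueError(f"result_type must be one of {sorted(ALLOWED_RESULT_TYPES)}")
    return {
        "engine": engine,            # 1. search engine
        "q": query,                  # 2. search query
        "location": location,        # 3. geographic market
        "result_type": result_type,  # 4. organic, paid, or local results
        "num": num,                  # Cap on results to keep volume proportionate
    }
```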

After a successful API call, the response should ideally be converted into a parseable structure such as a JSON object or a pandas DataFrame. Structuring SERP data this way allows for seamless integration into existing SEO workflows.

Parsing also enables easier data manipulation and more in-depth data analysis.
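A sketch of flattening a parsed SERP response into rows, assuming a hypothetical response shape with an `organic_results` list whose items carry `position`, `link`, and `title`. The resulting list of dicts can be passed straight to `pd.DataFrame` if pandas is in use:

```python
from urllib.parse import urlparse


def serp_to_rows(payload: dict) -> list[dict]:
    """Flatten organic results into rows ordered by ranking position."""
    rows = []
    for item in payload.get("organic_results", []):  # Assumed response key
        link, position = item.get("link"), item.get("position")
        if not link or position is None:
            continue  # Skip malformed entries rather than guessing
        rows.append({
            "position": int(position),
            "url": link,
            "domain": (urlparse(link).hostname or "").removeprefix("www."),
            "title": item.get("title", ""),
        })
    return sorted(rows, key=lambda row: row["position"])
```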

Notably, an effective SERP API call should balance the volume of data retrieved against its relevance to the SEO campaign. Striking this balance requires an understanding of the brand’s SEO goals and objectives, coupled with proficient Python programming skills.

This ensures that the data retrieved carries actionable insights that can drive an SEO campaign to success.

Insights from Processing SERP Result URLs

The processing of URLs from SERP results provides valuable insights for a successful SEO campaign. Python plays a central role, parsing the SERP data and analyzing the resultant URLs.

Web scraping tools like BeautifulSoup and lxml, used with Python, allow for the extraction of data from high-ranking web pages. This data can include keyword usage, link count, and content structure, all valuable inputs for directing an SEO strategy.

Processing SERP result URLs also shows where competitors acquire their backlinks, revealing potential link opportunities.

Python can classify the links found on these URLs by whether they are follow or nofollow, which anchor text they use, and how valuable the linking domain is. This analysis guides strategic link building to boost domain authority and search engine rankings.
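A sketch of that classification, assuming the links on a ranking page have already been extracted into dicts with `href` and `rel` keys. Treating `nofollow`, `sponsored`, and `ugc` as non-followed matches Google’s documented rel values; counting subdomains as external is a simplifying assumption:

```python
from collections import Counter
from urllib.parse import urlparse

# rel values that mark a link as not passing ranking credit.
NON_FOLLOW_RELS = {"nofollow", "sponsored", "ugc"}


def host(url: str) -> str:
    """Lowercase hostname without a leading 'www.'."""
    return (urlparse(url).hostname or "").removeprefix("www.")


def classify_links(page_url: str, links: list[dict]) -> list[dict]:
    """Tag each link as internal/external and follow/nofollow (subdomains count as external)."""
    page_host = host(page_url)
    classified = []
    for link in links:
        link_host = host(link["href"])
        rel_tokens = set((link.get("rel") or "").lower().split())
        classified.append({
            **link,
            "domain": link_host,
            "external": link_host != page_host,
            "follow": not (rel_tokens & NON_FOLLOW_RELS),
        })
    return classified


def followed_outbound_domains(classified: list[dict]) -> Counter:
    """Count external, followed links per destination domain."""
    return Counter(item["domain"] for item in classified if item["external"] and item["follow"])
```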

Detailing Backlink Count and URL Rank Analysis

In the world of SEO, understanding the relationship between a URL’s rank and its backlink count is essential. With Python, SEOs can collect, analyze, and interpret the data they need to formulate their SEO strategy.

Python can fetch extensive lists of backlinks pointing to any URL and organize the linking domains by link count, which can indicate a webpage’s popularity and authority.

Analyzing the link counts of top-ranking URLs can indicate roughly how many backlinks might be needed to compete effectively. This data is vital in outlining an effective link-building strategy to boost search engine rankings.

Identifying patterns within the backlink data for high-ranking URLs can also unearth correlations between specific backlink features and ranking. This can include factors such as anchor text optimization, the value of linking domains, and relevance to target keywords.
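A sketch of both ideas: the median referring-domain count of the top results as a rough target, and Spearman rank correlation between position and referring domains. Spearman is a choice made for this sketch (it tolerates skewed link counts), and with only ten results any correlation is weak evidence, not proof of cause:

```python
from statistics import median


def average_ranks(values: list[float]) -> list[float]:
    """1-based ranks; tied values share the mean of the positions they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared_rank = (start + end) / 2 + 1
        for k in range(start, end + 1):
            ranks[order[k]] = shared_rank
        start = end + 1
    return ranks


def spearman(x: list[float], y: list[float]) -> float:
    """Spearman's rho: the Pearson correlation of the two rank lists."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length lists with at least two items")
    rx, ry = average_ranks(x), average_ranks(y)
    mean_x, mean_y = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    spread_x = sum((a - mean_x) ** 2 for a in rx) ** 0.5
    spread_y = sum((b - mean_y) ** 2 for b in ry) ** 0.5
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined when one list is constant")
    return cov / (spread_x * spread_y)


def benchmark(positions: list[int], referring_domains: list[int], top_n: int = 10) -> dict:
    """Median referring domains and rank correlation for the top_n SERP positions."""
    pairs = sorted(zip(positions, referring_domains))[:top_n]
    pos = [p for p, _ in pairs]
    rds = [rd for _, rd in pairs]
    return {
        "median_referring_domains": median(rds),
        # Negative rho: better (smaller) positions tend to have more referring domains.
        "spearman_rho": spearman(pos, rds),
    }
```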

Such insights can be instrumental in formulating a data-driven, competitive strategy for SEO.

Exploring Anchor Text: Usage and Importance

Anchor text, the clickable text in a hyperlink, is a powerful yet often overlooked SEO lever. Used well, anchor text can improve a webpage’s SEO performance, affecting both the site’s overall domain authority and the linked page’s relevance.

In SEO backlink analysis, understanding how top-ranking URLs use anchor text provides valuable insight. In particular, using Python to parse and analyze this text reveals the interplay between anchor text and a page’s ranking.

Here are some steps for exploring anchor text using Python:

1. Extract the backlink data of the URL of interest.

2. Use Python’s BeautifulSoup or lxml for web scraping to retrieve the anchor text.

3. Conduct a frequency analysis of the anchor text to identify patterns.

4. Compare the anchor text patterns of high-ranking URLs to the current anchor text strategies.
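Steps 3 and 4 can be sketched with `collections.Counter`, assuming the anchor texts for your site and a competitor have already been extracted as lists of strings:

```python
from collections import Counter


def normalize_anchor(text: str) -> str:
    """Lowercase and collapse whitespace so trivial variants count together."""
    return " ".join(text.lower().split())


def anchor_distribution(anchors: list[str]) -> dict[str, float]:
    """Share of backlinks using each normalized anchor text (empty anchors skipped)."""
    counts = Counter(normalize_anchor(a) for a in anchors if a.strip())
    total = sum(counts.values())
    return {anchor: count / total for anchor, count in counts.items()}


def compare_anchor_profiles(
    ours: list[str], theirs: list[str], top: int = 10
) -> list[tuple[str, float, float]]:
    """For a competitor's most common anchors, pair their share with ours (0.0 if unused)."""
    our_dist = anchor_distribution(ours)
    their_dist = anchor_distribution(theirs)
    ranked = sorted(their_dist.items(), key=lambda item: item[1], reverse=True)[:top]
    return [(anchor, share, our_dist.get(anchor, 0.0)) for anchor, share in ranked]
```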

Recognizing patterns in how anchor text is used highlights effective strategies and provides a distinct competitive advantage.

Armed with this knowledge, SEOs can better tailor their anchor text strategy, focusing on optimizing keyword placement within anchor texts and ensuring maximum relevancy. This can lead to improvements in both user experience and SERP rankings.

Finding Top Anchor Text By Backlinks: Techniques and Tools

Top anchor text by backlinks offers a snapshot of the phrases most frequently used to link to a page. Python allows for the extraction and analysis of such data, guiding SEO professionals toward more impactful backlink strategies.

Web scraping tools like BeautifulSoup and Scrapy, used with Python, can retrieve and process anchor text from backlinks. This is done by targeting the ‘a’ (anchor) HTML elements: each element’s ‘href’ attribute holds the link target, and its text content is the anchor text.

Once the raw anchor text data is compiled, Python’s text processing capabilities come into play. By isolating the most common phrases used in anchor texts, SEO professionals can discern link building patterns that are successful in driving search traffic.
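A sketch of isolating common phrases: count two-word phrases across anchors, counting each phrase once per referring domain so a single sitewide link does not dominate. The `domain` and `anchor_text` field names and the once-per-domain rule are assumptions for illustration:

```python
import re
from collections import Counter

# Words are runs of lowercase letters, digits, or apostrophes.
WORD_RE = re.compile(r"[a-z0-9']+")


def top_anchor_phrases(backlinks: list[dict], n: int = 2, limit: int = 10) -> list[tuple[str, int]]:
    """Most common n-word anchor phrases, each counted at most once per referring domain."""
    seen: set[tuple[str, str]] = set()
    counts: Counter = Counter()
    for link in backlinks:
        words = WORD_RE.findall(link["anchor_text"].lower())
        for i in range(len(words) - n + 1):
            phrase = " ".join(words[i:i + n])
            key = (link["domain"], phrase)
            if key not in seen:  # Ignore repeats of the same phrase from the same domain
                seen.add(key)
                counts[phrase] += 1
    return counts.most_common(limit)
```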

Such techniques and tools offer a competitive edge in understanding SEO dynamics. By identifying top anchor text by backlinks, one can gain deeper insight into their SEO strategy’s current effectiveness, potential areas of improvement, and new opportunities for optimization.

Conclusion

Analyzing SERP backlink profiles in bulk using Python is a potent approach to SEO.

It gives businesses an in-depth understanding of their SEO effectiveness, competitor strategies, and industry trends.

Python offers robust tools and facilities for extracting, processing, analyzing, and visualizing this critical data.

With Python, businesses can automate time-consuming tasks, uncover rich SEO insights, and make data-backed decisions to improve their search rankings.

Leveraging Python for SEO analysis is, therefore, an investment worth making for businesses aiming to strengthen their digital presence while undertaking strategic, outcome-oriented SEO.